Yet another great anecdote to Occam's razor. Eventually, we fell back on a more simplistic mapping algorithm. Unfortunately, this form of algorithm created a less-than-optimal interaction experience that did not work well with everybody. At the start, we accounted for the different heights of the user and allowed for a more adaptive system where the system would 'self zero' based on the user's height. Firstly, the mental model to control such interfaces is different for every users. In Kinetic Touchless 3.0, we had two main interactional challenges. With these two-dimensional data, we are able to remap these coordinates into input signals to control the interaction. The system encased in the conductor stand is able to pick up the left-to-right motion and the up-to-down motion of the user's hands. In Kinetic Touchless 3.0, the interaction has been upgraded to accept two-dimensional data derived from the user's hands. In the first two instalments, the interaction occurs on a one-dimensional level, where the user either moves their hand in-to-out or left-to-right in a linear motion.

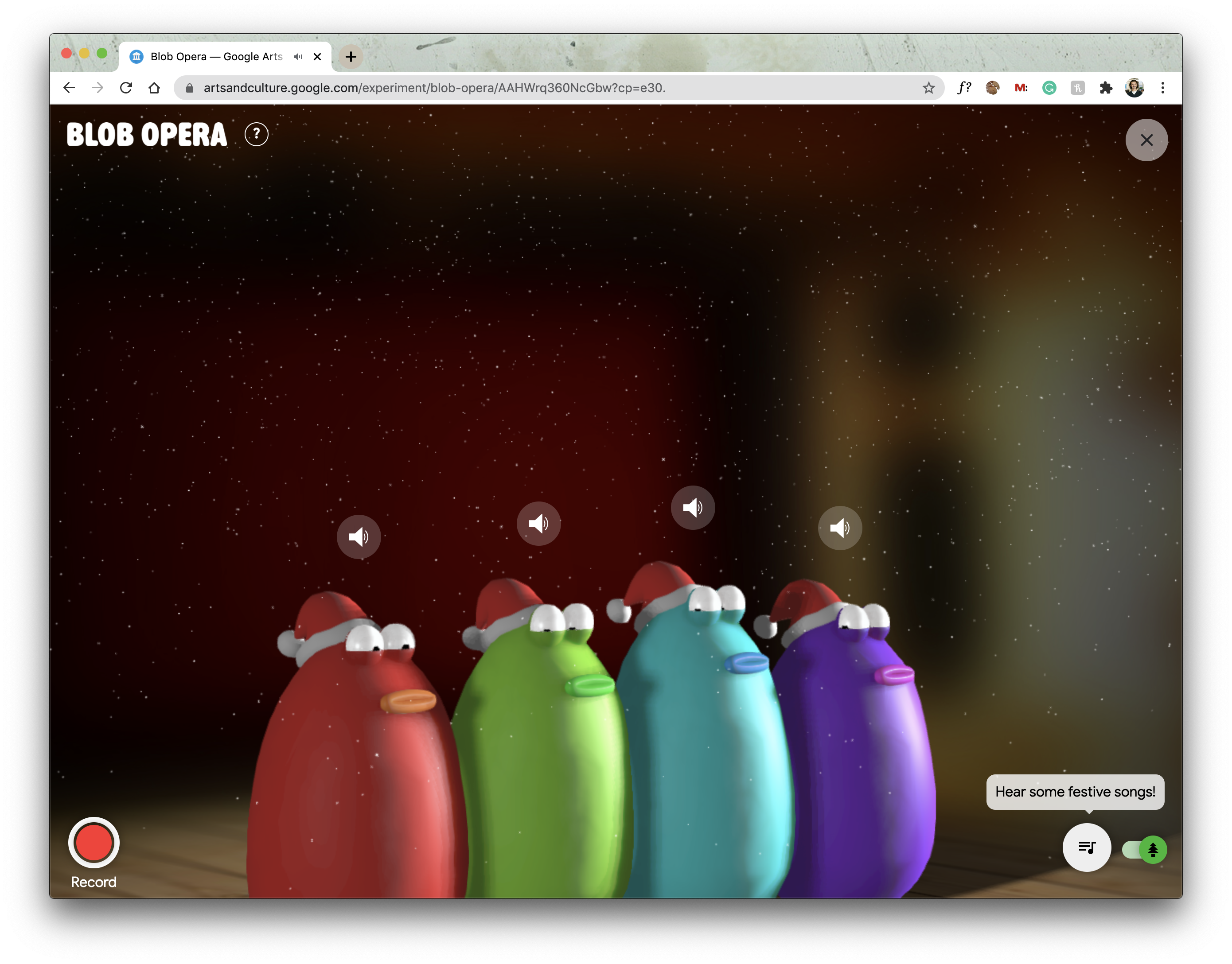

The main difference is the methodology used to sense and interpret the inputs. "Kinetic Touchless 3.0 retains the essence of its two predecessors – sensing the user's gestural input and providing a touchless interface to the said interactive touchpoint. Update September 20: Kevin Yeo from Stuck Labs has offered us a bit more insight into the project:

Kinetic Touchless 3.0 | Touchless Symphony for the Joy of Movement
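To make the remapping idea above a little more concrete, here is a minimal sketch of how two-dimensional hand coordinates could be turned into control signals. This is not Stuck Labs' actual code: the `HandMapper` class, the metre-based ranges, and the `calibrate` step standing in for the 'self zero' behaviour are all assumptions made for illustration. Leaving `y_zero` at its default gives the simpler fixed mapping.

```python
from dataclasses import dataclass
from typing import Tuple


def clamp(value: float, low: float, high: float) -> float:
    """Keep value inside the closed interval [low, high]."""
    return max(low, min(high, value))


@dataclass
class HandMapper:
    """Hypothetical mapper from a sensed hand position to control signals."""

    # Assumed sensing ranges in metres; the real values are not given in the post.
    x_range: Tuple[float, float] = (-0.5, 0.5)  # left-to-right span
    y_range: Tuple[float, float] = (0.0, 0.6)   # low-to-high span
    y_zero: float = 0.0                         # vertical offset; 0.0 = fixed mapping

    def calibrate(self, resting_height: float) -> None:
        """Emulate a 'self zero': treat the user's resting hand height as zero."""
        self.y_zero = resting_height

    def map(self, x: float, y: float) -> Tuple[float, float]:
        """Return (horizontal, vertical) signals, each clamped to [0, 1]."""
        x_lo, x_hi = self.x_range
        y_lo, y_hi = self.y_range
        u = (x - x_lo) / (x_hi - x_lo)
        v = ((y - self.y_zero) - y_lo) / (y_hi - y_lo)
        return clamp(u, 0.0, 1.0), clamp(v, 0.0, 1.0)


if __name__ == "__main__":
    mapper = HandMapper()
    print(mapper.map(0.0, 0.3))   # hand centred, mid height
    mapper.calibrate(0.2)         # adaptive variant: zero at the resting height
    print(mapper.map(0.0, 0.5))
```

The fixed variant has no per-user state, so it behaves the same way for everyone, which is one plausible reason a simpler mapping can feel more predictable than an adaptive one.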
